FacialAnnotation

Only in Wrap4D

The node is used to annotate facial features on the neutral mesh. The annotation includes eyelid and lip contours as well as selections of different parts of the lips.

The node provides input data for the FacialWrapping node. This data helps Wrap understand where certain parts of the face are located on a given base mesh.

Contours

The annotation of the contours is done in 2D camera space using splines. The spline points are then projected through the camera onto a mesh.
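The projection step can be pictured as casting a ray from the camera through each 2D spline point and taking the nearest hit on the mesh. The following is a minimal sketch of that idea, not Wrap's actual implementation: it assumes a pinhole camera at the origin looking down +Z with a focal length given in pixels, and every function name here (`pixel_to_ray`, `ray_triangle`, `project_point`) is hypothetical. The ray-triangle test is the standard Möller-Trumbore algorithm.

```python
from typing import Optional, Sequence, Tuple

Vec3 = Tuple[float, float, float]
Triangle = Tuple[Vec3, Vec3, Vec3]


def sub(a: Vec3, b: Vec3) -> Vec3:
    return (a[0] - b[0], a[1] - b[1], a[2] - b[2])


def dot(a: Vec3, b: Vec3) -> float:
    return a[0] * b[0] + a[1] * b[1] + a[2] * b[2]


def cross(a: Vec3, b: Vec3) -> Vec3:
    return (a[1] * b[2] - a[2] * b[1],
            a[2] * b[0] - a[0] * b[2],
            a[0] * b[1] - a[1] * b[0])


def pixel_to_ray(px: float, py: float, width: int, height: int,
                 focal_px: float) -> Vec3:
    """Ray direction through a pixel (assumed convention: camera at the
    origin, looking down +Z, principal point at the image centre)."""
    return (px - width / 2.0, py - height / 2.0, focal_px)


def ray_triangle(origin: Vec3, direction: Vec3, tri: Triangle,
                 eps: float = 1e-9) -> Optional[float]:
    """Moller-Trumbore test: ray parameter t of the hit, or None."""
    v0, v1, v2 = tri
    e1, e2 = sub(v1, v0), sub(v2, v0)
    p = cross(direction, e2)
    det = dot(e1, p)
    if abs(det) < eps:          # ray is parallel to the triangle plane
        return None
    inv = 1.0 / det
    s = sub(origin, v0)
    u = dot(s, p) * inv
    if u < 0.0 or u > 1.0:
        return None
    q = cross(s, e1)
    v = dot(direction, q) * inv
    if v < 0.0 or u + v > 1.0:
        return None
    t = dot(e2, q) * inv
    return t if t > eps else None


def project_point(px: float, py: float, width: int, height: int,
                  focal_px: float,
                  triangles: Sequence[Triangle]) -> Optional[Vec3]:
    """Nearest 3D hit of the ray through (px, py), or None on a miss."""
    origin = (0.0, 0.0, 0.0)
    d = pixel_to_ray(px, py, width, height, focal_px)
    hits = [t for t in (ray_triangle(origin, d, tri) for tri in triangles)
            if t is not None]
    if not hits:
        return None
    t = min(hits)               # closest surface to the camera wins
    return (d[0] * t, d[1] * t, d[2] * t)
```

A spline point whose ray misses the mesh has no 3D position in this sketch, which is one reason the camera and the geometry need to be aligned before annotating.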

The contours consist of semantic and extra points.

Semantic points are points that you can easily find on the mesh texture. Any painted marker or line on the actor’s lips or eyelids is a semantic point. The number of semantic points is constant for a given scanning session. Lip and eyelid corners are always semantic points. The number of semantic points in the FacialAnnotation node should match the number of semantic points tracked on the actor’s face in Track. To achieve that, you can import contours from a detection file exported from Track using the Import from Detection button.

Extra points are only used to adjust the shape of the contour. The number of extra points can be arbitrary. Extra points are resampled before passing to the node output so it doesn’t matter how many extra points you are using as long as the contour shape is accurate and correct.
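To illustrate why the number of extra points does not matter, here is a minimal sketch of arc-length resampling for one contour segment. It is an assumption about how such resampling could work, not a description of the node's internals: the function name `resample_polyline` is hypothetical, and the segment is treated as a 2D polyline running from one semantic point to the next.

```python
import math
from typing import List, Sequence, Tuple

Point = Tuple[float, float]


def resample_polyline(points: Sequence[Point], count: int) -> List[Point]:
    """Return `count` points spaced evenly by arc length along `points`.

    The first and last input points (the semantic points in this sketch)
    are always kept; everything in between is re-derived from the shape.
    """
    if len(points) < 2 or count < 2:
        raise ValueError("need at least two input points and two samples")

    # Cumulative arc length at every input point.
    cum = [0.0]
    for a, b in zip(points, points[1:]):
        cum.append(cum[-1] + math.dist(a, b))
    total = cum[-1]

    result: List[Point] = []
    seg = 0
    for i in range(count):
        target = total * i / (count - 1)
        # Advance to the segment that contains the target length.
        while seg < len(points) - 2 and cum[seg + 1] < target:
            seg += 1
        seg_len = cum[seg + 1] - cum[seg]
        w = 0.0 if seg_len == 0.0 else (target - cum[seg]) / seg_len
        a, b = points[seg], points[seg + 1]
        result.append((a[0] + w * (b[0] - a[0]), a[1] + w * (b[1] - a[1])))
    return result
```

Two annotations that trace the same shape with different numbers of extra points yield nearly the same samples under this scheme, so only the accuracy of the shape matters.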

The contour annotation should closely match the annotation of the neutral frame in Track.

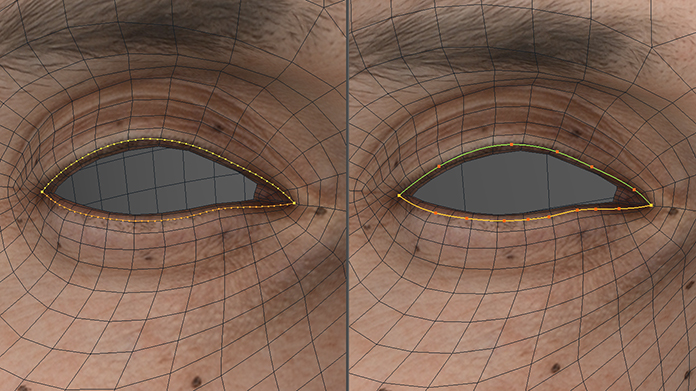

Proper annotation of eyelid contours is very important, especially for further wrapping of “eye-closed” expressions. For the eye to close properly, we recommend that the annotated contour closely match the edge loop corresponding to the eyelash attachment line. Please make sure that not only the contour on the left but also its projection on the right accurately matches the corresponding edge loop. Even small deviations in the annotation of the eyelids may cause noticeable artifacts on the eyelid contours during wrapping, so please pay close attention to this step.

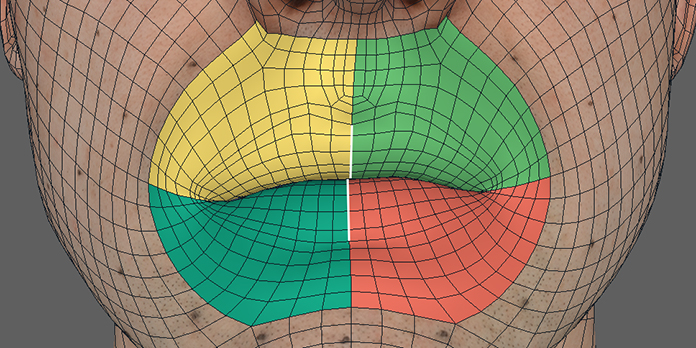

Polygon Selections

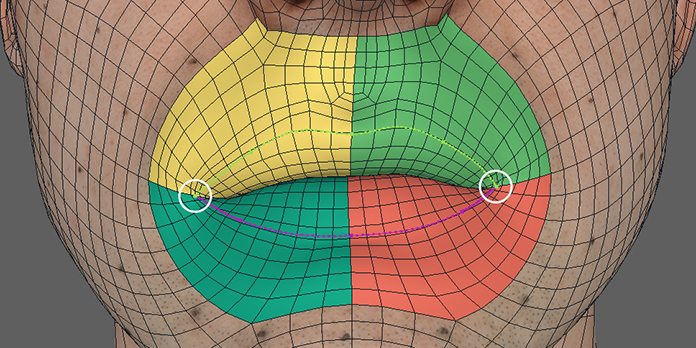

Polygon selections are used to annotate different regions on the lips. These selections are used when fitting the inner contours of the lips.

You need to annotate the following parts:

- upper right lip

- upper left lip

- lower right lip

- lower left lip

- mouth socket

Note

All the parts are named relative to the actor (i.e., anatomically).

The selections should not overlap.
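If the selections are available as sets of polygon indices, the no-overlap rule can be checked with a few set intersections. This is a minimal sketch under that assumption; the dictionary layout and the function name `find_overlaps` are hypothetical and do not correspond to any Wrap API.

```python
from itertools import combinations
from typing import Dict, List, Set, Tuple


def find_overlaps(
    selections: Dict[str, Set[int]]
) -> List[Tuple[str, str, Set[int]]]:
    """Return (name_a, name_b, shared polygon indices) for every pair of
    selections that share at least one polygon."""
    overlaps = []
    for (name_a, polys_a), (name_b, polys_b) in combinations(
        selections.items(), 2
    ):
        shared = polys_a & polys_b
        if shared:
            overlaps.append((name_a, name_b, shared))
    return overlaps


# Hypothetical polygon indices for the five required selections.
selections = {
    "upper right lip": {0, 1, 2, 3},
    "upper left lip": {3, 4, 5},      # polygon 3 is selected twice
    "lower right lip": {6, 7},
    "lower left lip": {8, 9},
    "mouth socket": {10, 11, 12},
}

for name_a, name_b, shared in find_overlaps(selections):
    print(f"{name_a} and {name_b} share polygons {sorted(shared)}")
```

An empty result means every polygon belongs to at most one selection.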

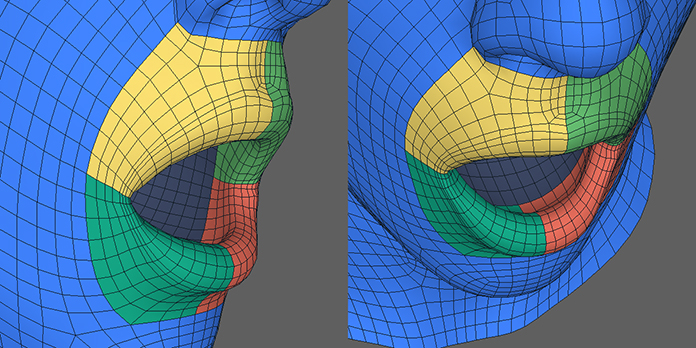

The inner border of the lip selections should roughly match the base of the gum. The outer border should be wide enough so that it’s not occluded by the lips during extreme expressions.

It is very important that both corners of the lip contours lie exactly on the border between the upper and lower lip selections.

The borders between the left and the right lip selections are used to detect the centers of the inner lip contours. Make sure that these borders correspond to anatomical centers of the lips of the actor.

Editor

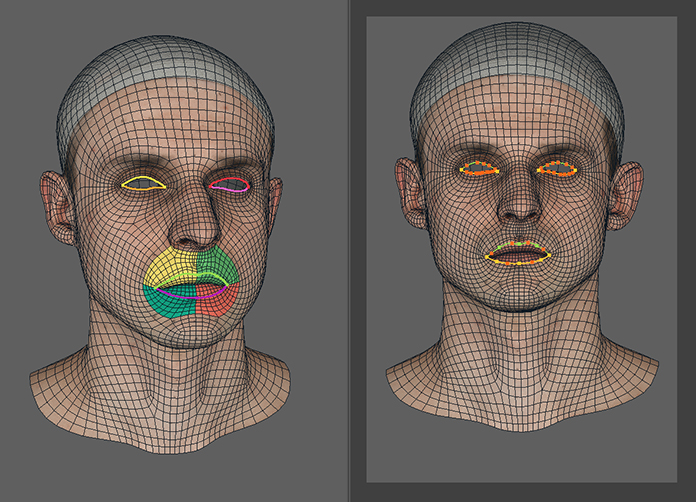

The FacialAnnotation node has a visual editor with two viewports.

The left viewport is used to preview the results of the projection of the lip and the eyelid contours through the camera onto the model in 3D space. It also shows polygon selections for different lip parts.

The right viewport displays the model through the camera view and allows editing contours in 2D space.

Spline Editing

To edit a contour shape just click and drag any point on the spline.

Click on an empty area on the spline to create an extra point. CTRL + click on an extra point to remove it.

| Action | Result |
| --- | --- |
| LMB | to add a new point |
| CTRL + LMB | to remove a point |
| Click and drag on any point | to move it |

Inputs

- Geometry:

(Geometry) Geometry to annotate

- Camera:

(Camera) A camera that is used to project 2D contours onto the mesh in 3D

- Upper Right Lip Selection:

(PolygonSelection) Selection of the upper right part of the lips (relative to the actor)

- Upper Left Lip Selection:

(PolygonSelection) Selection of the upper left part of the lips (relative to the actor)

- Lower Right Lip Selection:

(PolygonSelection) Selection of the lower right part of the lips (relative to the actor)

- Lower Left Lip Selection:

(PolygonSelection) Selection of the lower left part of the lips (relative to the actor)

- Mouth Socket Selection:

(PolygonSelection) Selection of the mouth socket

Output

(FacialAnnotation) Facial annotation

Parameters

- Use Camera Resolution:

if set, the camera resolution will be used for projection

- Camera Resolution:

camera width and height that are used for projection

- Show Polygon Selection:

if set, the polygon selections are displayed in the left viewport

- Create New:

opens a dialog for creating a new annotation with a custom number of semantic points

- Import from Detection:

imports contours from a detection JSON file. This file is usually exported from Track. We highly recommend always starting an annotation by importing it from detection. This way you can be sure that you are using the same number of semantic points as was used for detection

- Export:

saves the facial annotation to a JSON file

- Reset:

resets contours to default